Heads up: MangaDex.zip will close on 2025-06-03. This article will be updated accordingly upon closing. Forum post: https://forums.mangadex.org/threads/2563010/

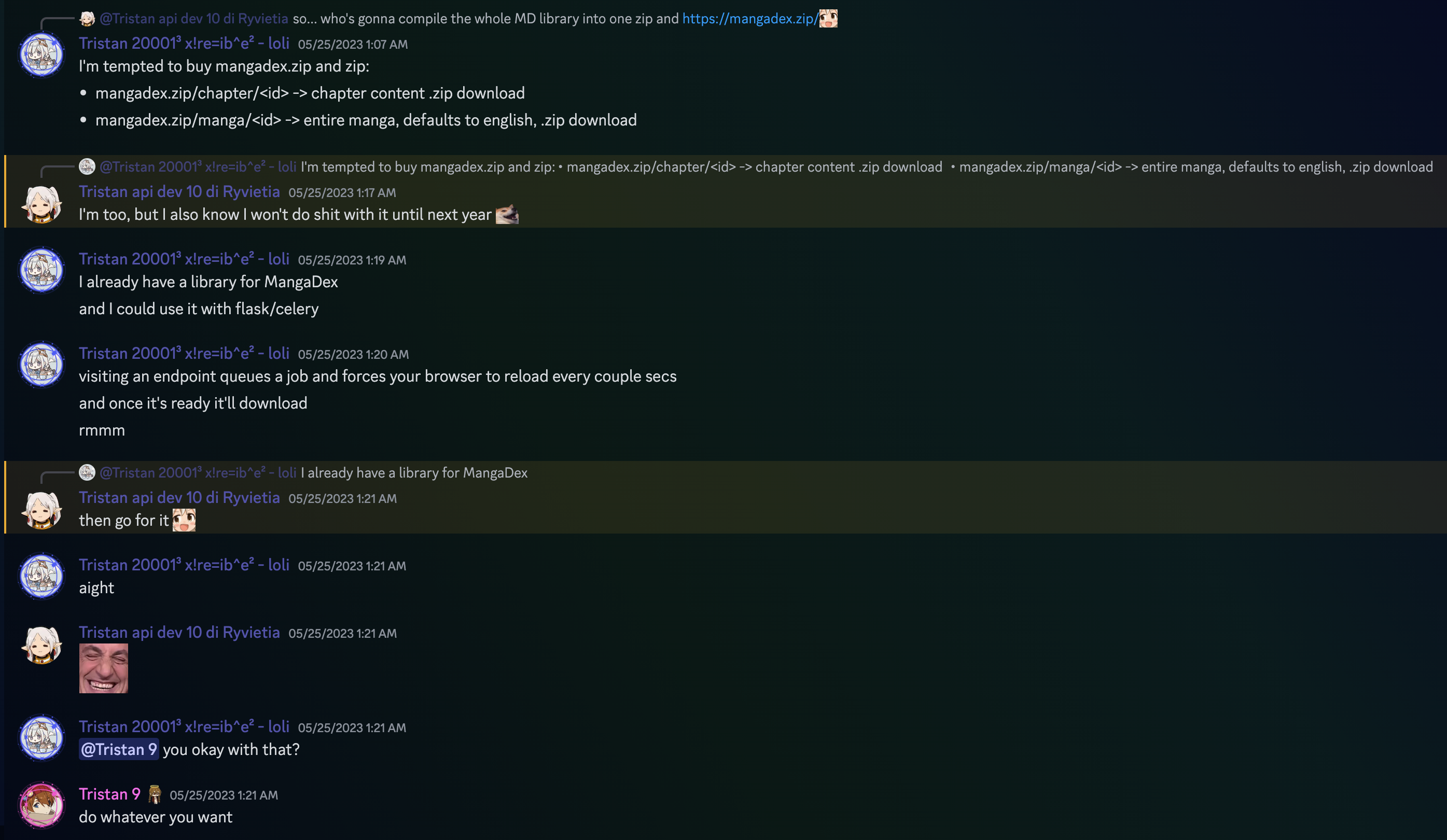

About 3 years ago I started MangaDex.zip, out of a random chit-chat in the MangaDex Discord server.

Basically, you replace “.org” with “.zip” and instantly start downloading what you were looking at: entire manga or individual chapters.
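As a rough illustration of that rewrite, here is a small Python sketch. The helper name `to_zip_url` is made up for this example, and it only swaps the top-level domain on MangaDex hosts; it says nothing about how the service itself parsed requests.

```python
from urllib.parse import urlsplit, urlunsplit


def to_zip_url(url: str) -> str:
    """Swap the .org TLD for .zip on MangaDex URLs; leave other URLs untouched."""
    parts = urlsplit(url)
    host = parts.netloc
    if host == "mangadex.org" or host.endswith(".mangadex.org"):
        host = host[: -len(".org")] + ".zip"
    return urlunsplit(parts._replace(netloc=host))


if __name__ == "__main__":
    print(to_zip_url("https://mangadex.org/chapter/a13f1f4f-b130-4aa4-8594-a986d8248c40"))
```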

And, you know the drill: it starts with a funny thing, everyone says “nice joke”, everyone laughs at how you bought a .zip domain for 25 bucks… except people actually started using it.

The code was open-source for the most part and updated until 2024. Starting mid-2024, the open-source version on GitHub was abandoned (as were my other personal projects there) in favor of my own Git server. The improved versions of the app were not made public as they integrated tightly with my infrastructure solutions, which are private.

Here are the approximate stats over the years:

| Type | 2023 | 2024 | 2025 | 2026 (so far) |
|---|---|---|---|---|
| Manga requests | 900 | 8750 | 33500 | 6400 |
| Chapter requests | 3100 | 26500 | 85000 | 19000 |
| Manga API | 10 | 800 | 9300 | 1450 |
| Chapter API | N/A | N/A | 4000 | 900 |
| User download rate | 77% | 81% | 98% | 99% |
| Bot rate | N/A | 3% | 1% | (none) |

N/A means that the data wasn’t collected. For the Chapter API, it was due to a bug in the code that prevented stats from writing to disk. For the bot rate, monitoring started in late 2023.

With the average manga download size being a bit over 1GB, we were looking at approximately 52PB of combined egress (to users) in 2025. Let’s take a quick look at a few aspects of how it worked.

Server-side infrastructure

MangaDex.zip had 2 components bundled in the same app: the frontend (Queue Client) and the backend (Queue Worker). A node could run either, or both, depending on the use case.

It was designed to be stateless and required no database. The queue client had a list of workers it could use. A queue worker could be used by multiple clients. High availability was managed by HAProxy for the frontend, and each backend had a readiness probe (max group/tasks, free space minimums, etc.).
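To make the readiness probe idea concrete, here is a minimal Python sketch. The field names and thresholds (`max_tasks`, `min_free_bytes`) are assumptions for illustration; the actual probe and its limits are not described beyond "max group/tasks, free space minimums".

```python
import shutil
from dataclasses import dataclass


@dataclass
class WorkerState:
    active_tasks: int    # tasks currently being processed
    max_tasks: int       # hypothetical upper bound on concurrent tasks
    free_bytes: int      # free space on the task data volume
    min_free_bytes: int  # hypothetical minimum free space to accept work


def free_space(path: str) -> int:
    """Free bytes on the filesystem holding `path`."""
    return shutil.disk_usage(path).free


def is_ready(state: WorkerState) -> bool:
    """A worker is ready only if it has both task capacity and enough disk space."""
    has_capacity = state.active_tasks < state.max_tasks
    has_space = state.free_bytes >= state.min_free_bytes
    return has_capacity and has_space
```

A load balancer health check could then map `is_ready` to an HTTP 200 or 503 response, so that full or saturated workers are skipped without any shared state.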

When initiating a download, the queue client would pick the next free worker to send the task to. If none were free, the least busy worker would be picked. Workers were sorted by priority; this priority was set by the administrator (me) based on network conditions and similar factors. On completion of a download by the worker, there were 2 possibilities: proxy the download through the queue client to the worker, or send the user directly to the worker. This was also decided by the administrator, and proxying was to be used if and only if the worker was not accessible from the internet, as it has a bandwidth drawback.
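The selection rule can be sketched in Python as follows. The `Worker` fields and the convention that a lower `priority` number is preferred are assumptions made for this example, not the real data model.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Worker:
    name: str
    priority: int      # assumption: lower number = preferred by the administrator
    active_tasks: int  # number of tasks the worker is currently handling
    ready: bool = True # result of the worker's readiness probe


def pick_worker(workers: Sequence[Worker]) -> Optional[Worker]:
    """Pick the first free worker in priority order, else the least busy one."""
    candidates = sorted((w for w in workers if w.ready), key=lambda w: w.priority)
    if not candidates:
        return None
    for worker in candidates:
        if worker.active_tasks == 0:
            return worker
    # No free worker: min() keeps the earliest (highest-priority) one on ties.
    return min(candidates, key=lambda w: w.active_tasks)
```

Sorting first and scanning second keeps the administrative priority as the tie-breaker in both branches.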

With the project gaining traction and usage going up with no revenue in sight, I had to do some research to save bandwidth and API calls to MangaDex, as the API calls are limited.

Downstream caching and request bundling

This was relatively simple: I needed a way to make sure that downloading the same content would not end up in more calls to upstream.

Instead of deleting the task after its TTL had expired, nodes would move it to an S3 bucket on local infrastructure, named by what it identified and not by the task ID (e.g. `/chapter/a13f1f4f-b130-4aa4-8594-a986d8248c40`). This would allow workers to check a central source of truth. Manga objects had a TTL of 86400 seconds (1 day), with each request adding 3600s to the TTL. Chapter objects did not have a TTL. To prevent DMCA claims (more on that later), this S3 storage was proxied and only accessible with a valid task ID. A worker could look up the S3 storage, determine the TTL if need be, and send the data directly to the user.
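Here is a small Python sketch of the naming and TTL rules above. The class and function names are hypothetical, and it assumes the 3600s bonus is added to the object's expiry time on each hit, which is one reading of the description; no S3 client is involved.

```python
from dataclasses import dataclass
from typing import Optional

MANGA_BASE_TTL = 86400        # 1 day, from the text
TTL_BONUS_PER_REQUEST = 3600  # added on each request, from the text


def object_key(kind: str, uuid: str) -> str:
    """Name objects by what they identify, e.g. /chapter/<uuid>."""
    return f"/{kind}/{uuid}"


@dataclass
class CachedObject:
    key: str
    expires_at: Optional[float]  # None means the object never expires


def store(kind: str, uuid: str, now: float) -> CachedObject:
    """Manga objects get a base TTL; chapter objects have none."""
    expires_at = now + MANGA_BASE_TTL if kind == "manga" else None
    return CachedObject(object_key(kind, uuid), expires_at)


def touch(obj: CachedObject, now: float) -> bool:
    """Return True if the object is still valid, extending its TTL on a hit."""
    if obj.expires_at is None:
        return True
    if now >= obj.expires_at:
        return False
    obj.expires_at += TTL_BONUS_PER_REQUEST
    return True
```

With this scheme, a frequently requested manga stays cached for as long as it keeps being requested, while a one-off download expires after a day.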

Additionally, I used task bundling to coalesce different tasks for the same object into a single task. When checking for available workers, the frontend would check each worker’s task metadata to compare the UUIDs. If an ongoing or completed task was found, the user would be redirected to this task instead of creating a new task.
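A minimal Python sketch of this bundling check follows. The `Task` shape, the status strings and the choice to queue new tasks on the first worker are assumptions for the example; the real metadata format is not public.

```python
import uuid
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Task:
    task_id: str
    object_uuid: str  # UUID of the manga or chapter being downloaded
    status: str       # assumed values: "running", "completed", "failed"


def find_existing_task(workers_tasks: List[List[Task]], object_uuid: str) -> Optional[Task]:
    """Scan every worker's task metadata for a reusable task on the same object."""
    for tasks in workers_tasks:
        for task in tasks:
            if task.object_uuid == object_uuid and task.status in ("running", "completed"):
                return task
    return None


def submit(workers_tasks: List[List[Task]], object_uuid: str) -> Task:
    """Reuse an ongoing or completed task if one exists, else create a new one."""
    existing = find_existing_task(workers_tasks, object_uuid)
    if existing is not None:
        return existing
    task = Task(str(uuid.uuid4()), object_uuid, "running")
    workers_tasks[0].append(task)  # simplification: always queue on the first worker
    return task
```

Two users requesting the same chapter a few seconds apart would then share one task ID, and therefore one set of upstream calls.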

Upstream caching

Upstream caching consisted of “replacing” the MangaDex upstream by a custom server. By effectively caching all media, downloads from upstream (the proxy) to any worker would be faster.

The caching server supported pinning for specific titles with content-awareness. The most frequently requested chapters would be kept at the top of the stack (similar to an LRU cache). If an entire manga was frequently downloaded, the caching server would notice (thanks to hints from the worker about the kind of task) and cache accordingly.

By "replacing", I actually mean checking the caching upstream first, then, if it was empty or did not respond, going to the MangaDex upstream. API calls were still handled by the worker itself, as the primary bottleneck of the MangaDex API’s rate limiting system is the number of public IPs hitting it: the more public IPs, the more requests you can send in a given time period.

If the data was absent from the caching upstream at the moment of checking, the caching upstream would receive the data from the worker for storage.
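The lookup order and write-back can be sketched in Python as below. This is a sequential fallback rather than a true race between sources, and the three callables (`cache_get`, `upstream_get`, `cache_put`) are placeholders invented for the example, standing in for whatever HTTP clients the worker used.

```python
from typing import Callable, Optional


def fetch_media(
    path: str,
    cache_get: Callable[[str], Optional[bytes]],
    upstream_get: Callable[[str], Optional[bytes]],
    cache_put: Callable[[str, bytes], None],
) -> bytes:
    """Try the caching upstream first, then the real upstream, then write back."""
    try:
        data = cache_get(path)
    except OSError:
        data = None  # an unreachable cache is treated like a miss
    if data:
        return data

    data = upstream_get(path)
    if data is None:
        raise LookupError(f"{path} not found upstream")

    try:
        cache_put(path, data)  # the worker hands the data to the cache for storage
    except OSError:
        pass  # a failed write-back must not fail the download
    return data
```

Passing the sources in as callables keeps the sketch testable with plain dictionaries and makes it easy to swap in a real client later.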

Mobile user detection

For mobile users (detected via the viewport and/or user agent), the light option would default to true if unset. If set, it would override the default choice.

This reduced the amount of data sent to users, as mobile users have more space constraints than desktop users and usually cannot notice the difference on small screens. Mobile users represented less than 10% of the userbase. Still, saving here and there is important, and as the developer of a product it is important to set good defaults.
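The default described above could look like this in Python. The user agent pattern and the 768px viewport threshold are illustrative guesses, not the values the service used; only the `light=1` parameter comes from the article.

```python
import re
from typing import Optional

# Hypothetical heuristics: a coarse user agent pattern and a viewport width cutoff.
MOBILE_UA = re.compile(r"Android|iPhone|iPad|Mobile", re.IGNORECASE)
MOBILE_MAX_VIEWPORT = 768


def is_mobile(user_agent: str, viewport_width: Optional[int] = None) -> bool:
    """Guess whether the client is a mobile device."""
    if viewport_width is not None and viewport_width <= MOBILE_MAX_VIEWPORT:
        return True
    return bool(MOBILE_UA.search(user_agent))


def resolve_light(
    light_param: Optional[str],
    user_agent: str,
    viewport_width: Optional[int] = None,
) -> bool:
    """An explicit light parameter always wins; otherwise default by device type."""
    if light_param is not None:
        return light_param == "1"
    return is_mobile(user_agent, viewport_width)
```

Keeping the explicit parameter ahead of detection means a mobile user can still ask for full-quality files with `light=0`.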

Note: downloading “light” chapters would disable the caching described in both “Downstream caching and request bundling” and “Upstream caching”, as light=1 downloads represented less than 5% of the download mass.

Why end it?

It’s a mix of everything. There are multiple reasons, but the first one is that I’ve been hosting this myself. I’m the only maintainer of the project (apart from a couple GitHub contributions before moving to my own Git), and I don’t really have the time to maintain it anymore.

Then there are infrastructure costs. With the increasing costs (thanks, AI) I cannot afford to maintain 5 public nodes of this service on public cloud, for a project that does not make money (not even enough to pay for the hosting costs). The infrastructure is entirely hosted by Nyakuten:

- 1 frontend + 1 failover frontend

- 5 nodes with dedicated 1G public transit for the app, 2TB of storage for caching and 500G for task data

- 4TB of task cache on a Ceph Object pool

- 8TB of upstream cache on a CephFS pool

The Ceph storage clusters are backed by me and are a byproduct of past infrastructure work, while half of the nodes are hosted in public cloud facilities (Hetzner and Scaleway).

No more MangaDex@Home

I mainly joined MangaDex in 2021 because I was motivated by the MangaDex@Home project, a distributed caching infrastructure. Now that MDAH is permanently dead with no hope of ever seeing it again, there is no longer any hope of seeing fast, unrestricted upstream calls.

I updated MangaDex.zip multiple times while MDAH was dying to remove it from the servers to contact, and the death of MDAH is what rendered MangaDex.zip slow, which forced me to adapt (reduce concurrent downloads, etc.).

DMCA requests

I have been tanking multiple DMCA requests. This started with the MDAH project, but did not cease with MangaDex.zip, as various IP and rights-holder companies accuse me of hosting pirated content.

I had to remove content from the tasks (for the emails that were dealt with before the cache TTL had expired), and even blacklist entire titles from being downloaded.

MangaDex itself

For the past couple of years, MangaDex has been taking a direction that I am clearly opposed to. It started with replying to DMCA requests, followed by a decrease in available content and an increase in policies.

This is not the kind of ideology I want to endorse or support, and while I support the community, I will no longer throw money out the window for a website that is trying to go corporate, just like Crunchyroll did.

Outro

With around 200k downloads, MangaDex.zip is going away for an indefinite period of time (which will most likely be permanent). The domain will stay up as long as Squarespace isn’t trying to empty my wallet over a cursed TLD, unless someone worthy comes to me.

Regarding contact, there’s some info on the front page if you want to discuss taking over the project or domain for some reason. I’m open to discussing this!